Opster Team

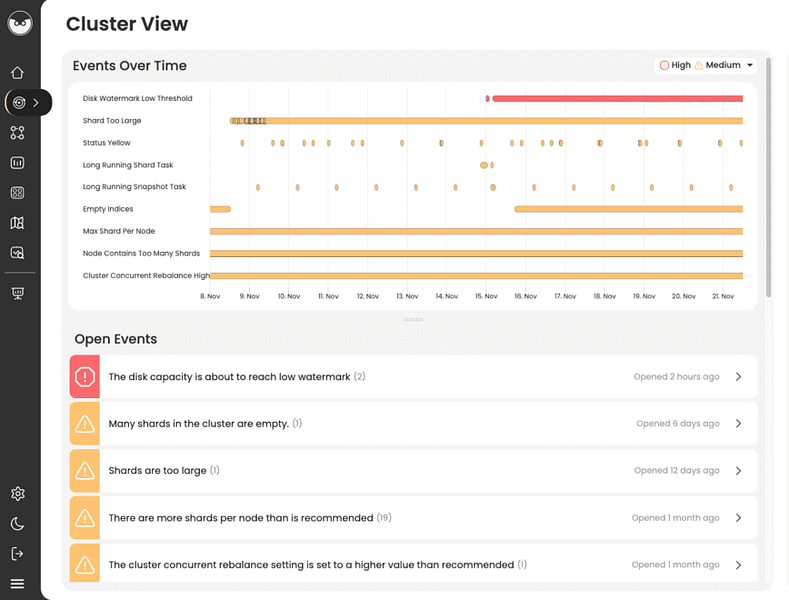

Crossing the high disk watermark can be avoided if it is detected early. Before you read this guide, we strongly recommend running the Elasticsearch Error Check-Up, which detects issues that cause Elasticsearch errors, and specifically problems that cause disk space to run out quickly. The tool can help prevent the flood stage disk watermark [95%] from being exceeded again. It is a free tool that requires no installation and takes 2 minutes to complete.

Quick summary

This error is caused by low disk space on a data node. As a preventive measure, Elasticsearch logs this message and marks the indices on that node read-only, as explained below.

To pinpoint how to resolve issues causing flood stage disk watermark [95%] to be breached, run Opster’s free Elasticsearch Health Check-Up. The tool has several checks on disk watermarks and can provide actionable recommendations on how to resolve and prevent this from occurring (even without increasing disk space).

Explanation

Elasticsearch considers the available disk space on a data node before deciding whether to allocate new shards to it, relocate shards away from it, or block all write operations on the indices that have shards on it. Each of these actions is tied to a different threshold. This is because Elasticsearch indices consist of shards that are persisted on data nodes, and low disk space can cause issues.

Settings related to this log:

cluster.routing.allocation.disk.watermark has three thresholds: low, high, and flood_stage (defaults: 85%, 90%, and 95%). They can be changed dynamically and accept either percentages or absolute byte values. A percentage refers to used disk space, while a byte value refers to the free space that must remain. Percentages are more flexible and easier to maintain when nodes have different disk sizes, as in hot/warm deployments.
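To make the three thresholds concrete, here is a minimal Python sketch that reports which watermark a given disk usage has crossed. It assumes percentage-based thresholds at the default values; the function name `watermark_stage` and the constant `DEFAULT_WATERMARKS` are hypothetical and are not part of Elasticsearch.

```python
# Illustrative only: default percentage thresholds (used disk space).
DEFAULT_WATERMARKS = {"low": 85.0, "high": 90.0, "flood_stage": 95.0}


def watermark_stage(used_percent, watermarks=DEFAULT_WATERMARKS):
    """Return the highest watermark exceeded by used_percent, or None."""
    stage = None
    # Thresholds are checked in ascending order, so the last match wins.
    for name in ("low", "high", "flood_stage"):
        if used_percent > watermarks[name]:
            stage = name
    return stage


if __name__ == "__main__":
    for used in (50.0, 87.0, 91.0, 96.2):
        print(used, watermark_stage(used))
```

A node at 96.2% used disk is past the flood stage, which is the situation this log message reports.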

Permanent fixes

- Delete unused indices.

- Attach external disk or increase the disk used by the data node.

- Manually move shards away from the node using cluster reroute API.

- Reduce replicas count to 1 (if replicas > 1).

- Add new data nodes.
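As a rough illustration of two of the fixes above, the following Python sketch builds the JSON bodies for a cluster reroute `move` command (sent with `POST _cluster/reroute`) and for lowering the replica count (sent with `PUT <index>/_settings`). The helper names, index name, and node names are hypothetical placeholders; the sketch only builds and prints the bodies and does not contact a cluster.

```python
import json


def build_move_command(index, shard, from_node, to_node):
    """Body for POST _cluster/reroute that moves one shard between nodes."""
    return {
        "commands": [
            {
                "move": {
                    "index": index,
                    "shard": shard,
                    "from_node": from_node,
                    "to_node": to_node,
                }
            }
        ]
    }


def build_replica_settings(replicas):
    """Body for PUT <index>/_settings that sets the replica count."""
    return {"index": {"number_of_replicas": replicas}}


if __name__ == "__main__":
    # Placeholder names; substitute your own index and node names.
    print(json.dumps(build_move_command("logs-2024.01.01", 0, "node-1", "node-2"), indent=2))
    print(json.dumps(build_replica_settings(1), indent=2))
```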

Temporary hacks/fixes

1. Relax the thresholds by dynamically updating the settings with the cluster update settings API:

PUT _cluster/settings
{
  "transient": {
    "cluster.routing.allocation.disk.watermark.low": "100gb",
    "cluster.routing.allocation.disk.watermark.high": "50gb",
    "cluster.routing.allocation.disk.watermark.flood_stage": "10gb",
    "cluster.info.update.interval": "1m"
  }
}

2. Disable the disk check with the cluster update settings API:

PUT /_cluster/settings
{
  "transient": {
    "cluster.routing.allocation.disk.threshold_enabled": false
  }
}

3. Even after these fixes, Elasticsearch versions before 7.4 do not remove the write block on indices; from 7.4 onward the block is released automatically once disk usage falls below the high watermark. To remove the block manually, call the following API:

PUT _all/_settings
{
  "index.blocks.read_only_allow_delete": null
}

Overview

There are three disk “watermark” thresholds on an Elasticsearch cluster. As the disk fills up on a node, the first threshold to be crossed is the “low disk watermark”, followed by the “high disk watermark”. Finally, the “disk flood stage” is reached. Once this threshold is passed, the cluster blocks writes to ALL indices that have at least one shard (primary or replica) on the node that has passed the watermark. Reads (searches) are still possible.

How to resolve the issue

Once this threshold is passed, writes to the affected indices are rejected, which can cause loss of data in your application, so you should not delay in taking action. Here are possible actions you can take to resolve this issue:

- Delete old indices

- Remove documents from existing indices

- Increase disk space on the node

- Add new data nodes to the cluster

Although you may be reluctant to delete data, in a logging system it is often better to delete old indices (which you may be able to restore from a snapshot later if available) than to lose new data. However, this decision will depend upon the architecture of your system and the queueing mechanisms you have available.

Check the disk space on each node

You can see the space you have available on each node by running:

GET _nodes/stats/fs
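The response can be long, so it may help to reduce it to one used-disk percentage per node. The Python sketch below does this on a hand-written sample shaped like the `_nodes/stats/fs` response (`nodes.<id>.name` and `nodes.<id>.fs.total.*_in_bytes`). The function name and the sample values are hypothetical, and using `available_in_bytes` as the free-space figure is an assumption of this sketch.

```python
def disk_usage_by_node(stats):
    """Map node name -> percentage of disk used, from a nodes stats response."""
    usage = {}
    for node in stats["nodes"].values():
        total = node["fs"]["total"]["total_in_bytes"]
        available = node["fs"]["total"]["available_in_bytes"]
        usage[node["name"]] = round(100.0 * (total - available) / total, 1)
    return usage


# Hand-written sample in the shape of GET _nodes/stats/fs (values are made up).
SAMPLE_STATS = {
    "nodes": {
        "id-1": {
            "name": "node-1",
            "fs": {"total": {"total_in_bytes": 1000, "free_in_bytes": 60, "available_in_bytes": 40}},
        },
        "id-2": {
            "name": "node-2",
            "fs": {"total": {"total_in_bytes": 2000, "free_in_bytes": 1100, "available_in_bytes": 1000}},
        },
    }
}

if __name__ == "__main__":
    for name, used in sorted(disk_usage_by_node(SAMPLE_STATS).items()):
        print(f"{name}: {used}% used")
```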

Check if the cluster is rebalancing

If the high watermark has been passed, Elasticsearch should start relocating shards to other nodes that are still below the low watermark. You can check whether any relocation is in progress (a non-zero relocating_shards value in the response) by calling:

GET _cluster/health/
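A small Python sketch of how the health response might be interpreted is shown below. It reads the `relocating_shards`, `initializing_shards`, and `unassigned_shards` fields from a hand-written sample; the function names and sample values are hypothetical.

```python
def shard_movement(health):
    """Extract the shard counters that indicate ongoing or pending movement."""
    keys = ("relocating_shards", "initializing_shards", "unassigned_shards")
    return {key: health.get(key, 0) for key in keys}


def is_relocating(health):
    """True if at least one shard is currently being relocated."""
    return health.get("relocating_shards", 0) > 0


# Hand-written sample in the shape of GET _cluster/health (values are made up).
SAMPLE_HEALTH = {
    "status": "yellow",
    "relocating_shards": 2,
    "initializing_shards": 0,
    "unassigned_shards": 1,
}

if __name__ == "__main__":
    print(shard_movement(SAMPLE_HEALTH))
    print("relocating:", is_relocating(SAMPLE_HEALTH))
```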

If you think that your cluster should be rebalancing shards to other nodes but it is not, there are probably some other cluster allocation rules which are preventing this from happening. The most likely causes are:

- The other nodes are already above the low disk watermark

- There are cluster allocation rules that govern the distribution of shards between nodes and conflict with the rebalancing requirements (e.g. zone awareness allocation)

- There are already too many rebalancing operations in progress

- The other nodes already contain replicas of the shards that could be rebalanced

Check the cluster settings

You can see the settings you have applied with this command:

GET _cluster/settings

If they are not appropriate, you can modify them using a command such as the one below:

PUT _cluster/settings
{
  "transient": {
    "cluster.routing.allocation.disk.watermark.low": "85%",
    "cluster.routing.allocation.disk.watermark.high": "90%",
    "cluster.routing.allocation.disk.watermark.flood_stage": "95%",
    "cluster.info.update.interval": "1m"
  }
}

How to prevent reaching this stage

There are various mechanisms that allow you to automatically delete stale data.

How to automatically delete stale data:

- Apply ILM (Index Lifecycle Management)

Using ILM, you can get Elasticsearch to automatically delete an index when it reaches a given age.

- Use date based indices

If your application uses date-based indices, then it is easy to delete old indices using a script or a tool such as Elasticsearch Curator.

- Use snapshots to store data offline

It may be appropriate to store snapshotted data offline and restore it in the event that the archived data needs to be reviewed or studied.

- Automate / simplify process to add new data nodes

Use automation tools such as Terraform to automate the addition of new nodes to the cluster. If this is not possible, at the very least ensure you have a clearly documented process to create new nodes, add TLS certificates and configuration, and bring them into the Elasticsearch cluster in a short and predictable time frame.
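For the date-based indices approach mentioned above, a cleanup script could start by selecting the indices whose date suffix is older than a retention period. The Python sketch below shows only that selection step. The `logs-` prefix, the `%Y.%m.%d` suffix format, and the function name are assumptions for illustration, and actually deleting the indices is left to your own tooling.

```python
from datetime import date, datetime, timedelta


def indices_older_than(index_names, days, today, prefix="logs-", date_format="%Y.%m.%d"):
    """Return the names whose date suffix is more than `days` days before `today`."""
    cutoff = today - timedelta(days=days)
    old = []
    for name in index_names:
        if not name.startswith(prefix):
            continue
        try:
            index_date = datetime.strptime(name[len(prefix):], date_format).date()
        except ValueError:
            continue  # skip names without a parseable date suffix
        if index_date < cutoff:
            old.append(name)
    return sorted(old)


if __name__ == "__main__":
    names = [
        "logs-2024.01.01",
        "logs-2024.02.15",
        "logs-2024.03.01",
        "metrics-2024.01.01",
        "logs-current",
    ]
    # With a 30-day retention on 2024-03-10, only logs-2024.01.01 is selected.
    print(indices_older_than(names, days=30, today=date(2024, 3, 10)))
```

Passing `today` explicitly rather than reading the clock inside the function keeps the selection easy to test before it is wired to a delete call.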

Log Context

The log “Flood stage disk watermark [{}] exceeded on {}; all indices on this node will be marked read-only” comes from the class DiskThresholdMonitor.java.

We extracted the following from the Elasticsearch source code for those seeking in-depth context:

/**
 * Warn about the given disk usage if the low or high watermark has been passed
 */
private void warnAboutDiskIfNeeded(DiskUsage usage) {
    // Check absolute disk values
    if (usage.getFreeBytes()
